Waiting for confirmation: a series about mempool and relay policy

Copies of all published parts of our weekly series on transaction relay, mempool inclusion, and mining transaction selection—including why Bitcoin Core has a more restrictive policy than allowed by consensus and how wallets can use that policy most effectively.

- Why do we have a mempool?

- Incentives

- Bidding for block space

- Feerate estimation

- Policy for Protection of Node Resources

- Policy Consistency

- Network Resources

- Policy as an Interface

- Policy proposals

- Get Involved

Why do we have a mempool?

Originally published in Newsletter #251

Many nodes on the Bitcoin network store unconfirmed transactions in an in-memory pool, or mempool. This cache is an important resource for each node and enables the peer-to-peer transaction relay network.

Nodes that participate in transaction relay download and validate blocks gradually rather than in spikes. Every ~10 minutes when a block is found, nodes without a mempool experience a bandwidth spike, followed by a computation-intensive period validating each transaction. On the other hand, nodes with a mempool have typically already seen all of the block’s transactions and store them in their mempools. With compact block relay, these nodes just download a block header along with shortids, and then reconstruct the block using transactions in their mempools. The amount of data used to relay compact blocks is tiny compared to the size of the block. Validating the transactions is also much faster: the node has already verified (and cached) signatures and scripts, calculated the timelock requirements, and loaded relevant UTXOs from disk if necessary. The node can also forward the block onto its other peers promptly, dramatically increasing network-wide block propagation speed and thus reducing the risk of stale blocks.

Mempools can also be used to build an independent fee estimator. The market for block space is a fee-based auction, and keeping a mempool allows users to have a better sense of what others are bidding and what bids have been successful in the past.

However, there is no such thing as “the mempool”—each node may receive different transactions. Submitting a transaction to one node does not necessarily mean that it has made its way to miners. Some users find this uncertainty frustrating, and wonder, “why don’t we just submit transactions directly to miners?”

Consider a Bitcoin network in which all transactions are sent directly from users to miners. One could censor and surveil financial activity by requiring that small number of mining entities to log the IP addresses corresponding to each transaction and to refuse any transactions matching a particular pattern. This type of Bitcoin may be more convenient at times, but would be missing a few of Bitcoin’s most valued properties.

Bitcoin’s censorship-resistance and privacy come from its peer-to-peer network. In order to relay a transaction, each node may connect to some anonymous set of peers, each of which could be a miner or somebody connected to a miner. This method helps obfuscate which node a transaction originates from as well as which node may be responsible for confirming it. Someone wishing to censor particular entities may target miners, popular exchanges, or other centralized submission services, but it would be difficult to block anything completely.

The general availability of unconfirmed transactions also helps minimize the entrance cost of becoming a block producer—someone who is dissatisfied with the transactions being selected (or excluded) may start mining immediately. Treating each node as an equal candidate for transaction broadcast avoids giving any miner privileged access to transactions and their fees.

In summary, a mempool is an extremely useful cache that allows nodes to distribute the costs of block download and validation over time, and gives users access to better fee estimation. At a network level, mempools support a distributed transaction and block relay network. All of these benefits are most pronounced when everybody sees all transactions before miners include them in blocks. Just like any cache, a mempool is most useful when it is “hot”, and it must be limited in size to fit in memory. Next week’s section will explore the use of incentive compatibility as a metric for keeping the most useful transactions in mempools.

Incentives

Originally published in Newsletter #252

Last week’s post discussed mempool as a cache of unconfirmed transactions that provides a decentralized method for users to send transactions to miners. However, miners are not obligated to confirm those transactions; a block with just a coinbase transaction is valid by consensus rules. Users can incentivize miners to include their transactions by increasing total input value without changing total output value, allowing miners to claim the difference as transaction fees.

Although transaction fees are common in traditional financial systems, new Bitcoin users are often surprised to find that on-chain fees are paid not in proportion to the transaction amount but by the weight of the transaction. Block space, instead of liquidity, is the limiting factor. Feerate is typically denominated in satoshis per virtual byte.
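To make the unit concrete, here is a minimal sketch of how a fee and a feerate could be computed. The helper names are hypothetical, and the conversion of four weight units per virtual byte is assumed from the segwit definition of virtual size rather than stated above.

```python
WITNESS_SCALE_FACTOR = 4  # assumed: weight units per virtual byte


def vsize_from_weight(weight: int) -> int:
    """Convert weight units to virtual bytes, rounding up."""
    return (weight + WITNESS_SCALE_FACTOR - 1) // WITNESS_SCALE_FACTOR


def fee_sats(input_values: list[int], output_values: list[int]) -> int:
    """The fee is whatever input value is not assigned to an output."""
    return sum(input_values) - sum(output_values)


def feerate_sat_per_vb(fee: int, weight: int) -> float:
    return fee / vsize_from_weight(weight)


# Two hypothetical payments of very different amounts but identical weight.
small = feerate_sat_per_vb(fee_sats([101_410], [100_000]), weight=564)
large = feerate_sat_per_vb(fee_sats([5_000_001_410], [5_000_000_000]), weight=564)
print(small, large)
```

Both transactions bid the same feerate for the same amount of block space, even though one moves far more value than the other.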

Consensus rules limit the space used by transactions in each block. This limit keeps block propagation times low relative to the block interval, reducing the risk of stale blocks. It also helps restrict the growth of the block chain and UTXO set, both of which directly contribute to the cost of bootstrapping and maintaining a full node.

As such, as part of their role as a cache of unconfirmed transactions, mempools also facilitate a public auction for inelastic block space: when functioning properly, the auction operates on free-market principles, i.e., priority is based purely on fees rather than relationships with miners.

Maximizing fees when selecting transactions for a block (which has limits on total weight and signature operations) is an NP-hard problem. This problem is further complicated by transaction relationships: mining a high-feerate transaction may require mining its low-feerate parent. Put another way, mining a low-feerate transaction may open up the opportunity to mine its high-feerate child.

The Bitcoin Core mempool computes the feerate for each entry and its ancestors (called ancestor feerate), caches that result, and uses a greedy block template building algorithm. It sorts the mempool in ancestor score order (the minimum of ancestor feerate and individual feerate) and selects ancestor packages in that order, updating the remaining transactions’ ancestor fee and weight information as it goes. This algorithm offers a balance between performance and profitability, and does not necessarily produce optimal results. Its efficacy can be boosted by restricting the size of transactions and ancestor packages—Bitcoin Core sets those limits to 400,000 and 404,000 weight units, respectively.
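The idea of selecting by ancestor feerate can be sketched in a few lines. This is a simplified illustration, not Bitcoin Core's implementation: it recomputes each package on every pass instead of caching and updating it, it ranks by ancestor feerate alone rather than the ancestor score, and the `Tx`, `package`, and `build_template` names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Tx:
    fee: int                                      # satoshis
    weight: int                                   # weight units
    parents: list = field(default_factory=list)   # txids of unconfirmed parents


def package(txid, pool, chosen):
    """txid plus every unconfirmed ancestor not already in the template."""
    pkg, stack = set(), [txid]
    while stack:
        cur = stack.pop()
        if cur not in pkg and cur not in chosen:
            pkg.add(cur)
            stack.extend(pool[cur].parents)
    return pkg


def build_template(pool, max_weight):
    template, chosen, remaining = [], set(), max_weight
    while True:
        best_rate, best_pkg = -1.0, None
        for txid in pool:
            if txid in chosen:
                continue
            pkg = package(txid, pool, chosen)
            weight = sum(pool[t].weight for t in pkg)
            if weight > remaining:
                continue
            rate = sum(pool[t].fee for t in pkg) / weight
            if rate > best_rate:
                best_rate, best_pkg = rate, pkg
        if best_pkg is None:
            return template
        # Parents must precede children: a parent always has fewer ancestors.
        ordered = sorted(best_pkg, key=lambda t: len(package(t, pool, set())))
        template.extend(ordered)
        chosen.update(best_pkg)
        remaining -= sum(pool[t].weight for t in best_pkg)


pool = {
    "A": Tx(fee=100, weight=400),
    "B": Tx(fee=3000, weight=400, parents=["A"]),
    "C": Tx(fee=1200, weight=400),
    "D": Tx(fee=400, weight=400),
}
print(build_template(pool, max_weight=1200))
```

In this toy pool the low-feerate parent A is selected first because it unlocks its high-feerate child B. On its own, A would have ranked last.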

Similarly, a descendant score is calculated that is used when selecting packages to evict from the mempool, as it would be disadvantageous to evict a low-feerate transaction that has a high-feerate child.

Mempool validation also uses fees and feerate when dealing with transactions that spend the same input(s), i.e. double-spends or conflicting transactions. Instead of always keeping the first transaction it sees, nodes use a set of rules to determine which transaction is the more incentive compatible to keep. This behavior is known as Replace by Fee.

It is intuitive that miners would maximize fees, but why should a non-mining node operator implement these policies? As mentioned in last week’s post, the utility of a non-mining node’s mempool is directly related to its similarity to miners’ mempools. As such, even if a node operator never intends to produce a block using the contents of its mempool, they have an interest in keeping the most incentive-compatible transactions.

While there are no consensus rules requiring transactions to pay fees, fees and feerate play an important role in the Bitcoin network as a “fair” way to resolve competition for block space. Miners use feerate to assess acceptance, eviction, and conflicts, while non-mining nodes mirror those behaviors in order to maximize the utility of their mempools.

The scarcity of block space exerts a downward pressure on the size of transactions and encourages developers to be more efficient in transaction construction. Next week’s post will explore practical strategies and techniques for minimizing fees in on-chain transactions.

Bidding for block space

Originally published in Newsletter #253

Last week we mentioned that transactions pay fees for the blockspace they use rather than the amount they transfer, and established that miners optimize their transaction selection to maximize collected fees. It follows that only the transactions residing at the top of the mempool when a block is found get confirmed. In this post, we will discuss practical strategies to get the most for our fees. Let’s assume we have a decent source of feerate estimates; we will talk more about feerate estimation in next week’s article.

While constructing transactions, some parts of the transaction are more flexible than others. Every transaction requires the header fields, the recipient outputs are determined by the payments being made, and most transactions require a change output. Both sender and receiver should prefer blockspace-efficient output types to reduce the future cost of spending their transaction outputs, but it’s during the input selection that there is the most room to change the final composition and weight of the transaction. As transactions compete by feerate [fee/weight], a lighter transaction requires a lower fee to reach the same feerate.

Some wallets, such as the Bitcoin Core wallet, try to combine inputs such that they avoid needing a change output altogether. Avoiding change saves the weight of an output now, but also saves the future cost of spending the change output later. Unfortunately, such input combinations will only seldom be available unless the wallet sports a large UTXO pool with a broad variety of amounts.

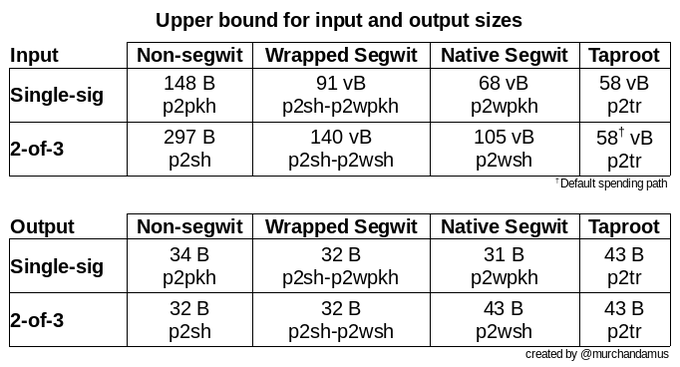
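A search for a changeless input set can be sketched as follows. This is an exhaustive toy search under assumed flat per-input and base fees, not the algorithm any particular wallet uses; `find_changeless` and its parameters are hypothetical names.

```python
from itertools import combinations


def find_changeless(utxos, target, base_fee, fee_per_input, tolerance):
    """Return the smallest input set that pays target plus fees with at most
    `tolerance` satoshis left over, or None if no such set exists."""
    for count in range(1, len(utxos) + 1):
        for inputs in combinations(utxos, count):
            excess = sum(inputs) - target - base_fee - fee_per_input * count
            if 0 <= excess <= tolerance:
                return inputs
    return None


utxos = [50_000, 30_000, 21_500, 8_000]
print(find_changeless(utxos, target=57_000, base_fee=500, fee_per_input=250, tolerance=300))
print(find_changeless(utxos, target=40_000, base_fee=500, fee_per_input=250, tolerance=300))
```

The second call finds nothing, which illustrates why such combinations are seldom available for a small UTXO pool. The search is also exponential in the number of UTXOs, so a real wallet would need to bound it.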

Modern output types are more blockspace-efficient than older output types. E.g. spending a P2TR input incurs less than 2/5ths of a P2PKH input’s weight. (Try it with our transaction size calculator!) For multisig wallets, the recently finalized MuSig2 scheme and the FROST protocol promise huge cost savings by permitting multisig functionality to be encoded in what looks like a single-sig input. Especially in times when blockspace demand goes through the roof, a wallet’s use of modern output types by itself translates to big cost savings.

Smart wallets change their selection strategy on the basis of the feerate: at high feerates they use few inputs and modern input types to achieve the lowest possible weight for the input set. Always selecting the lightest input set would locally minimize the cost of the current transaction, but also grind a wallet’s UTXO pool into small fragments. This could set the user up for transactions with huge input sets at high feerates later. Therefore, it is prudent for wallets to also select more and heavier inputs at low feerates to opportunistically consolidate funds into fewer modern outputs in anticipation of later blockspace demand spikes.

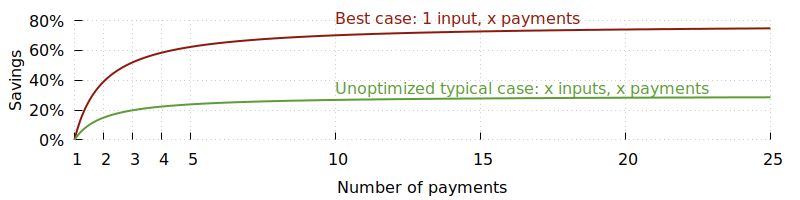

High-volume wallets often batch multiple payments into a single transaction to reduce the transaction weight per payment. Instead of incurring the overhead of the header bytes and the change output for each payment, they only incur the overhead cost once shared across all payments. Even just batching a few payments quickly reduces cost per payment.
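A rough calculation shows how quickly the shared overhead is amortized. The sizes below are approximate, assumed values for a wallet spending and paying P2WPKH, and the sketch further assumes a single input funds the whole batch.

```python
# Approximate sizes in vbytes; assumed values, rounded for illustration.
OVERHEAD_VB = 11   # version, locktime, counts, segwit marker and flag
INPUT_VB = 68      # one P2WPKH input
OUTPUT_VB = 31     # one P2WPKH output


def batch_vsize(payments: int, inputs: int = 1) -> int:
    """Size of one transaction paying `payments` recipients plus one change output."""
    return OVERHEAD_VB + inputs * INPUT_VB + (payments + 1) * OUTPUT_VB


def vsize_per_payment(payments: int) -> float:
    return batch_vsize(payments) / payments


for n in (1, 2, 5, 10):
    print(n, batch_vsize(n), round(vsize_per_payment(n), 1))
```

Under these assumptions a single payment costs 141 vbytes, while each payment in a batch of ten costs 42 vbytes.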

Still, even though many wallets err on the side of overpayment when estimating feerates, a slow block or a surge in transaction submissions sometimes leaves transactions unconfirmed longer than planned. In those cases, either the sender or receiver may want to reprioritize the transaction.

Users generally have two tools at their disposal to increase their transaction’s priority, child pays for parent (CPFP) and replace by fee (RBF). In CPFP, a user spends their transaction output to create a high-feerate child transaction. As described in last week’s post, miners are incentivized to pick the parent into the block in order to include the fee-rich child. CPFP is available to any user that gets paid by the transaction, so either receiver or sender (if they created a change output) can make use of it.
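The fee a CPFP child needs can be worked out from the combined size of parent and child. The sketch below assumes a single parent and a single child and ignores any other relatives in the mempool; `cpfp_child_fee` is a hypothetical helper.

```python
import math


def cpfp_child_fee(parent_fee, parent_vsize, child_vsize, target_feerate):
    """Fee (sats) the child must pay so that parent and child, evaluated
    together, reach target_feerate (sat/vB)."""
    package_fee = math.ceil(target_feerate * (parent_vsize + child_vsize))
    child_alone = math.ceil(target_feerate * child_vsize)
    # If the parent already meets the target, the child only pays for itself.
    return max(package_fee - parent_fee, child_alone)


# A 200 vB parent paying 2 sat/vB, bumped to 10 sat/vB by a 150 vB child.
print(cpfp_child_fee(parent_fee=400, parent_vsize=200, child_vsize=150, target_feerate=10))
```

The child here pays 3,100 sats, more than 20 sat/vB on its own, because it also has to make up the parent's shortfall.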

In RBF, the sender authors a higher-feerate replacement of the original transaction. The replacement transaction must reuse at least one input from the original transaction to ensure a conflict with the original and that only one of the two transactions can be included in the blockchain. Usually this replacement includes the payments from the original transaction, but the sender could also redirect the funds in the replacement transaction, or combine multiple transactions’ payments into one upon replacement. As described in last week’s post, nodes evict the original transaction in favor of the more incentive-compatible replacement transaction.

While both demand for and production of blockspace are outside our control, there are many techniques wallets can use to bid for blockspace effectively. Wallets can save fees by creating lighter transactions through eliminating the change output, spending native segwit outputs, and defragmenting their UTXO pool during low feerate environments. Wallets that support CPFP and RBF can also start with a conservative feerate and then update the transaction’s priority using CPFP or RBF if needed.

Feerate estimation

Originally published in Newsletter #254

Last week, we explored techniques for minimizing the fees paid on a transaction given a feerate. But what should that feerate be? Ideally, as low as possible to save money, but high enough to secure a spot in a block suitable for the user’s time preference.

The goal of fee(rate) estimation is to translate a target timeframe for confirmation to a minimal feerate the transaction should pay.

One complication of fee estimation is the irregularity of block space production. Let’s say a user needs to pay a merchant within one hour to receive their goods. The user may expect a block to be mined every 10 minutes, and thus aim for a spot within the next 6 blocks. However, it’s entirely possible for one block to take 45 minutes to be found. Fee estimators must translate between a user’s desired urgency or timeframe (something like “I want this to confirm by the end of the work day”) and a supply of block space (a number of blocks). Many fee estimators address this challenge by denominating confirmation targets in the number of blocks in addition to time.

With no information about transactions prior to their confirmation, one can build a naive fee estimator that uses historical data about what transaction feerates tend to land in blocks. As this estimator is blind to the transactions awaiting confirmation in mempools, it would become very inaccurate during unexpected fluctuations in block space demand and the occasional long block interval. Its other weakness is its reliance on information controlled wholly by miners, who would be able to drive feerates up by including fake high-feerate transactions in their blocks.

Fortunately, the market for block space is not a blind auction. We mentioned in our first post that keeping a mempool and participating in the peer-to-peer transaction relay network allows a node to see what users are bidding. The Bitcoin Core fee estimator also uses historical data to calculate the likelihood of a transaction at feerate f confirming within n blocks, but specifically tracks the height at which the node first sees a transaction and when it confirms. This method works around activity that happens outside the public fee market by ignoring it. If miners include artificially high-feerate transactions in their own blocks, this fee estimator isn’t skewed because it only uses data from transactions that were publicly relayed prior to confirmation.

We also have insights into the way transactions are selected for blocks. In a previous post, we mentioned that nodes emulate miner policies in order to keep incentive-compatible transactions in their mempools. Expanding on this idea, instead of looking only at past data, we could build a fee estimator that simulates what a miner would do. To find out what feerate a transaction would need to confirm in the next n blocks, the fee estimator could use the block assembly algorithm to project the next n block templates from its mempool and calculate the feerate that would beat the last transaction(s) that make it into block n.
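A toy version of such a projection is sketched below. It is deliberately simplified: it sorts by individual feerate instead of running a real block assembly algorithm, treats the next n blocks as one pool of capacity, and stops at the first transaction that does not fit. The function name and the `floor` parameter are hypothetical.

```python
def feerate_to_beat(mempool, n_blocks, block_vsize, floor=1):
    """mempool: list of (feerate_sat_per_vb, vsize) tuples.
    Returns the feerate of the last transaction projected into the next
    n_blocks, or `floor` if everything currently pending fits."""
    capacity = n_blocks * block_vsize
    used = 0
    last_included = None
    for feerate, vsize in sorted(mempool, reverse=True):
        if used + vsize > capacity:
            return last_included if last_included is not None else feerate
        used += vsize
        last_included = feerate
    return floor


mempool = [(50, 400), (30, 400), (20, 400), (10, 400), (5, 400), (2, 400)]
for n in (1, 2, 3):
    print(n, feerate_to_beat(mempool, n_blocks=n, block_vsize=1000))
```

With these toy numbers, a transaction must beat 30 sat/vB to be projected into the next block and 5 sat/vB to be projected into the next two. With three blocks of capacity everything pending fits, so the floor applies.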

Clearly, the efficacy of this fee estimator’s approach depends on the similarity between the contents of its mempool and the miners’, which can never be guaranteed. It is also blind to transactions a miner might include due to external motivations, e.g. transactions that belong to the miner or that paid out-of-band fees to be confirmed. The projection must also account for additional transaction broadcasts between now and when the projected blocks are found. It can do so by decreasing the size of its projected blocks to account for other transactions, but by how much?

This question once again highlights the utility of historical data. An intelligent model may be able to incorporate patterns of activity and account for external events that influence feerates such as typical business hours, a company’s scheduled UTXO consolidation, and activity in response to changes in Bitcoin’s trading price. The problem of forecasting block space demand remains ripe for exploration, and is likely to always have room for innovation.

Fee estimation is a multi-faceted and difficult problem. Bad fee estimation can waste funds by overpaying fees, add friction to the use of Bitcoin for payments, and cause L2 users to lose money by missing a window within which a timelocked UTXO had an alternate spending path. Good fee estimation allows users to clearly and precisely communicate transaction urgency to miners, and CPFP and RBF allow users to update their bids if initial estimates undershoot. Incentive-compatible mempool policies, combined with well-designed fee estimation tools and wallets, enable users to participate in an efficient, public auction for block space.

Fee estimators typically never return anything below 1sat/vB, regardless of how long the time horizon is or how few transactions are pending confirmation. Many consider 1sat/vB as the de facto floor feerate in the Bitcoin network, due to the fact that most nodes on the network (including miners) never accept anything below that feerate, regardless of how empty their mempools are. Next week’s post will explore this node policy and another motivation for utilizing feerate in transaction relay: protection from resource exhaustion.

Policy for Protection of Node Resources

Originally published in Newsletter #255

We started off our series by stating that much of Bitcoin’s privacy and censorship resistance stems from the decentralized nature of the network. The practice of users running their own nodes reduces central points of failure, surveillance, and censorship. It follows that one primary design goal for Bitcoin node software is high accessibility of running a node. Requiring each Bitcoin user to purchase expensive hardware, use a specific operating system, or spend hundreds of dollars per month in operational costs would very likely reduce the number of nodes on the network.

Additionally, a node on the Bitcoin network is a computer with internet connections to unknown entities that may launch a Denial of Service (DoS) attack by crafting messages that cause the node to run out of memory and crash, or spend its computational resources and bandwidth on meaningless data instead of accepting new blocks. As these entities are anonymous by design, nodes cannot predetermine whether a peer will be honest or malicious before connecting, and cannot effectively ban them even after an attack is observed. Thus, it is not just an ideal to implement policies that protect against DoS and limit the cost of running a full node, but an imperative.

General DoS protections are built into node implementations to prevent resource exhaustion. For example, if a Bitcoin Core node receives many messages from a single peer, it only processes the first one and adds the rest to a work queue to be processed after other peers’ messages. A node also typically first downloads a block header and verifies its Proof of Work (PoW) prior to downloading and validating the rest of the block. Thus, any attacker wishing to exhaust this node’s resources through block relay must first spend a disproportionately high amount of their own resources computing a valid PoW. The asymmetry between the huge cost for PoW calculation and the trivial cost of verification provides a natural way to build DoS resistance into block relay. This property does not extend to unconfirmed transaction relay.

General DoS protections don’t provide enough attack resistance to allow a node’s consensus engine to be exposed to input from the peer-to-peer network. An attacker attempting to craft a maximally computationally-intensive, consensus-valid transaction may send one like the 1MB “megatransaction” in block #364292, which took an abnormally long time to validate due to signature verification and quadratic sighashing. An attacker may also make all but the last signature valid, causing the node to spend minutes on their transaction, only to find that it is garbage. During that time, the node would delay processing a new block. One can imagine this type of attack being targeted at competing miners to gain a “head start” on the next block.

In an effort to avoid working on very computationally expensive transactions, Bitcoin Core nodes impose a maximum standard size and a maximum number of signature operations (or “sigops”) on each transaction, more restrictive than the block consensus limit. Bitcoin Core nodes also enforce limits on both ancestor and descendant package sizes, making block template production and eviction algorithms more effective and restricting the computational complexity of mempool insertion and deletion which require updating a transaction’s ancestor and descendant sets. While this means some legitimate transactions may not be accepted or relayed, those transactions are expected to be rare.

These rules are examples of transaction relay policy, a set of validation rules in addition to consensus which nodes apply to unconfirmed transactions.

By default, Bitcoin Core nodes do not accept transactions below the 1sat/vB minimum relay feerate (“minrelaytxfee”), do not verify any signatures before checking this requirement, and do not forward transactions unless they are accepted to their mempools. In a sense, this feerate rule sets a minimum “price” for network validation and relay. A non-mining node doesn’t ever receive fees; they are only paid to the miner who confirms the transaction. However, fees represent a cost to the attacker. Somebody who “wastes” network resources by sending an extremely large number of transactions eventually runs out of money to pay the fees.

The Replace by Fee policy implemented by Bitcoin Core requires that the replacement transaction pay a higher feerate than each transaction it directly conflicts with, but also requires that it pay a higher total fee than all of the transactions it displaces. The additional fees divided by the replacement transaction’s virtual size must be at least 1sat/vB. In other words, regardless of the feerates of the original and replacement transactions, the new transaction must pay “new” fees to cover the cost of its own bandwidth at 1sat/vB. This fee policy is not primarily concerned with incentive compatibility. Rather, this incurs a minimum cost for repeated transaction replacements to curb bandwidth-wasting attacks, e.g. one that adds just 1 additional satoshi to each replacement.
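The three fee conditions described above can be expressed as a small check. This sketch covers only those conditions and none of the other replacement rules a node may apply; the function name and the tuple representation are hypothetical.

```python
INCREMENTAL_FEERATE = 1  # sat/vB of "new" fees required for the replacement's own size


def rbf_fee_check(replacement, direct_conflicts, all_displaced):
    """Each transaction is a (fee_sats, vsize) tuple. `all_displaced` holds the
    direct conflicts plus any descendants evicted with them.
    Returns (ok, reason)."""
    r_fee, r_vsize = replacement
    for c_fee, c_vsize in direct_conflicts:
        # Compare feerates by cross-multiplying to stay in integers.
        if r_fee * c_vsize <= c_fee * r_vsize:
            return False, "feerate not higher than a direct conflict"
    displaced_fee = sum(fee for fee, _ in all_displaced)
    if r_fee <= displaced_fee:
        return False, "total fee not higher than displaced transactions"
    if r_fee - displaced_fee < INCREMENTAL_FEERATE * r_vsize:
        return False, "additional fee below 1 sat/vB of replacement size"
    return True, "ok"


original = (1000, 200)  # 5 sat/vB
print(rbf_fee_check((1001, 200), [original], [original]))  # one extra satoshi
print(rbf_fee_check((1200, 200), [original], [original]))
print(rbf_fee_check((1500, 400), [original], [original]))  # more fee, lower feerate
```

Only the second replacement passes. The first adds a single satoshi and so does not pay for its own bandwidth, and the third pays more in total but at a lower feerate than the transaction it conflicts with.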

A node that fully validates blocks and transactions requires resources including memory, computational resources, and network bandwidth. We must keep resource requirements low in order to make running a node accessible and to defend the node against exploitation. General DoS protections are not enough, so nodes apply transaction relay policies in addition to consensus rules when validating unconfirmed transactions. However, as policy is not consensus, two nodes may have different policies but still agree on the current chain state. Next week’s post will discuss policy as an individual choice.

Policy Consistency

Originally published in Newsletter #256

Last week’s post introduced policy, a set of transaction validation rules applied in addition to consensus rules. These rules are not applied to transactions in blocks, so a node can still stay in consensus even if its policy differs from that of its peers. Just like a node operator may decide to not participate in transaction relay, they are also free to choose any policy, up to none at all (exposing their node to the DoS risk). This means we cannot assume complete homogeneity of mempool policies across the network. However, in order for a user’s transaction to be received by a miner, it must travel through a path of nodes that all accept it into their mempool – dissimilarity of policy between nodes directly affects transaction relay functionality.

As an extreme example of policy differences between nodes, imagine a situation in which each node operator chose a random nVersion and only accepted transactions with that nVersion. As most peer-to-peer relationships would have incompatible policies, transactions would not propagate to miners.
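The effect of a single incompatible policy on a relay path can be simulated with a toy network. Everything here is hypothetical: the four-node topology, the `propagate` helper, and the representation of a policy as a Python predicate.

```python
from collections import deque


def propagate(tx, origin, peers, accepts):
    """Breadth-first relay: a node forwards tx to its peers only if its own
    policy accepts it. Returns the set of nodes whose mempools hold tx."""
    if not accepts[origin](tx):
        return set()
    reached, queue = {origin}, deque([origin])
    while queue:
        node = queue.popleft()
        for peer in peers[node]:
            if peer not in reached and accepts[peer](tx):
                reached.add(peer)
                queue.append(peer)
    return reached


# A single path: user <-> a <-> b <-> miner.
peers = {"user": ["a"], "a": ["user", "b"], "b": ["a", "miner"], "miner": ["b"]}
tx = {"nVersion": 2}

uniform = {node: (lambda t: t["nVersion"] == 2) for node in peers}
mixed = dict(uniform, b=lambda t: t["nVersion"] == 1)

print(sorted(propagate(tx, "user", peers, uniform)))
print(sorted(propagate(tx, "user", peers, mixed)))
```

With uniform policies the transaction reaches the miner. When node b alone applies a different rule, the transaction stops at node a, even though the miner itself would have accepted it.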

On the other end of the spectrum, identical policies across the network help converge mempool contents. A network with matching mempools relays transactions the most smoothly, and is also ideal for fee estimation and compact block relay as mentioned in previous posts.

Given the complexity of mempool validation and the difficulties that arise from policy disparities, Bitcoin Core has historically been conservative with the configurability of policies. While users are able to easily tweak the way sigops are counted (bytespersigop) and limit the amount of data embedded in OP_RETURN outputs (datacarriersize and datacarrier), they cannot opt out of the 400,000 weight-unit maximum standard weight or apply a different set of fee-related RBF rules without changing the source code.

Some of Bitcoin Core’s policy configuration options exist to accommodate the difference in node operating environments and purposes for running a node. For example, a miner’s hardware resources and purpose for keeping a mempool differ from a day-to-day user running a lightweight node on their laptop or Raspberry Pi. A miner may opt to increase their mempool capacity (maxmempool) or expiration timeline (mempoolexpiry) to store low feerate transactions during peak traffic, and then mine them later when traffic dies down. Websites providing visualizations, archives, and network statistics may run multiple nodes to collect as much data as possible and also display default mempool behavior.

On an individual node, the choice of mempool capacity affects the availability of fee-bumping tools. When the mempool minimum feerates rise due to transaction submissions exceeding the default mempool size, transactions purged from the “bottom” of the mempool and new ones that are below this feerate can no longer be fee-bumped using CPFP.

On the other hand, since the inputs used by the purged transactions are no longer spent by any transactions in the mempool, it may be possible to fee-bump via RBF when it wasn’t before. The new transaction isn’t actually replacing anything in the node’s mempool, so it doesn’t need to consider the usual RBF rules. However, nodes that haven’t evicted the original transaction (because they have a larger mempool capacity) treat the new transaction as a proposed replacement and require it to abide by the RBF rules. If the purged transaction was not signaling BIP125 replaceability, or the new transaction’s fee does not meet RBF requirements despite being high feerate, the miner may not accept their new transaction. Wallets must handle purged transactions carefully: the transaction’s outputs cannot be considered available for spending, but the inputs are similarly unavailable to reuse.

At first glance, it may seem that a node with larger mempool capacity makes CPFP more useful and RBF less useful. However, transaction relay is subject to emergent network behavior and there might not be a path of nodes accepting the CPFP from the user to the miner. Nodes typically only forward transactions once, upon accepting them to their mempools, and ignore announcements of transactions that already exist in their mempools; nodes that store more transactions act as black holes when those transactions are rebroadcast to them. Unless the entire network increases their mempool capacities (which would be a sign to change the default value), users should expect little benefit from increasing the capacity of their own mempools. The mempool minimum feerate set by default mempools limits the utility of using CPFP during high-traffic times. A user who managed to submit a CPFP transaction to their own increased-size mempool might fail to notice that the transaction did not propagate to anyone else.

The transaction relay network is composed of individual nodes which dynamically join and leave the network, each of whom must protect themselves against exploitation. As such, transaction relay can only be a best-effort and we cannot guarantee that every node learns about every unconfirmed transaction. At the same time, the Bitcoin network performs best if nodes converge on one set of transaction relay policies that makes mempools as homogeneous as possible. The next post will explore what policies have been adopted in order to fit the network’s interests as a whole.

Network Resources

Originally published in Newsletter #257

A previous post discussed protecting node resources, which may be unique to each node and thus sometimes configurable. We also made our case for why it is best to converge on one policy, but what should be part of that policy? This post will discuss the concept of network-wide resources, critical to things like scalability, upgradeability and accessibility of bootstrapping and maintaining a full node.

As discussed in previous posts, many of the Bitcoin network’s ideological goals are embodied in its distributed structure. Bitcoin’s peer-to-peer nature allows the rules of the network to emerge from rough consensus of the individual node operators’ choices and curbs attempts to acquire undue influence in the network. Those rules are then enforced by each node individually validating every transaction. A diverse and healthy node population requires that the cost of operating a node is kept low. It is hard to scale any project with a global audience, but doing so without sacrificing decentralization is akin to fighting with one hand tied behind your back. The Bitcoin project attempts this balancing act by being fiercely protective of its shared network resources: the UTXO set, the data footprint of the blockchain and the computational effort required to process it, and upgrade hooks to evolve the Bitcoin protocol.

There is no need to reiterate the entire blocksize war to realize that a limit on blockchain growth is necessary to keep it affordable to run your own node. However, blockchain growth is also dissuaded at the policy level by the minRelayTxFee of 1 sat/vbyte, ensuring a minimum cost to express some of the “unbounded demand for highly-replicated perpetual storage”.

Originally, the network state was tracked by keeping all transactions that still had unspent outputs. This much bigger portion of the blockchain got reduced significantly with the introduction of the UTXO set as the means of keeping track of funds. Since then, the UTXO set has been a central data structure. Especially during IBD, but also generally, UTXO lookups represent a major portion of all memory accesses of a node. Bitcoin Core already uses a manually optimized data structure for the UTXO cache, but the size of the UTXO set determines how much of it cannot fit in a node’s cache. A larger UTXO set means more cache misses which slows down block validation, IBD, and transaction validation speed. The dust limit is an example of a policy that restricts the creation of UTXOs, specifically curbing UTXOs that might never get spent because their amount falls short of the cost for spending them. Even so, “dust storms” with thousands of transactions occurred as recently as 2020.
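To make the dust limit concrete, here is a minimal sketch of how such a threshold can be derived: the cost of creating an output plus the cost of later spending it, priced at a fixed feerate. The 3 sat/vB dust feerate and the byte sizes below are assumptions based on commonly cited Bitcoin Core defaults, not values stated in this post, and the function names are ours.

```python
# Sketch of a dust threshold calculation. The feerate and sizes are
# assumptions (commonly cited defaults), not values taken from this post.
DUST_RELAY_FEERATE = 3  # sat/vB, assumed default

# (size of the output, size of the input that later spends it), in vbytes
SIZES = {
    "p2pkh": (34, 148),
    "p2wpkh": (31, 67),
}


def dust_threshold(script_type: str, feerate: int = DUST_RELAY_FEERATE) -> int:
    """Smallest amount (in sats) that is not considered dust."""
    out_size, in_size = SIZES[script_type]
    return (out_size + in_size) * feerate


def is_dust(amount_sats: int, script_type: str) -> bool:
    """An output is dust when its value is below the threshold."""
    return amount_sats < dust_threshold(script_type)
```

Under these assumptions, an output is uneconomical to create when the fees needed to create and spend it would exceed its value, which is exactly the class of UTXO the policy tries to keep out of the UTXO set.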

When it became popular to use bare multisig outputs to publish data onto the blockchain, the definition of standard transactions was amended to permit a single OP_RETURN output as an alternative. People realized that it would be impossible to prevent users from publishing data on the blockchain, but at least such data would not need to live in the UTXO set forever when published in outputs that could never be spent. Bitcoin Core 0.13.0 introduced a start-up option -permitbaremultisig that users may toggle to reject unconfirmed transactions with bare multisig outputs.

While the consensus rules allow output scripts to be freeform, only a few well-understood patterns are relayed by Bitcoin Core nodes. This makes it easier to reason about many concerns in the network, including validation costs and protocol upgrade mechanisms. For example, any one of the following would make a transaction non-standard: an input script that contains opcodes, a P2SH input with more than 15 signatures, or a P2WSH input whose witness stack has more than 100 items. (Check out this policy overview for more examples of policies and their motivations.)

Finally, the Bitcoin protocol is a living software project that will need to keep evolving to address future challenges and user needs. To that end, there are a number of upgrade hooks deliberately left consensus valid but unused, such as the annex, taproot leaf versions, witness versions, OP_SUCCESS, and a number of no-op opcodes. However, just like attacks are hindered by the lack of central points of failure, network-wide software upgrades involve a coordinated effort between tens of thousands of independent node operators. Nodes will not relay transactions that make use of any reserved upgrade hooks until their meaning has been defined. This discouragement is meant to dissuade applications from independently creating conflicting standards, which would make it impossible to adopt one application’s standard into consensus without invalidating another’s. Also, when a consensus change does happen, nodes that do not upgrade immediately—and thus do not know the new consensus rules—cannot be “tricked” into accepting a now-invalid transaction into their mempools. The proactive discouragement helps nodes be forward-compatible and enables the network to safely upgrade consensus rules without requiring a completely synchronized software update.

We can see that using policy to protect shared network resources aids in protecting the network’s characteristics, and keeps paths for future protocol development open. Meanwhile, we are seeing how the friction of growing the network against a strictly limited blockweight has been driving adoption of best practices, good technical design, and innovation: next week’s post will explore mempool policy as an interface for second-layer protocols and smart contract systems.

Policy as an Interface

Originally published in Newsletter #258

So far in this series, we have explored the motivations and challenges associated with decentralized transaction relay, leading to a local and global need for transaction validation rules more restrictive than consensus. Since transaction relay policy changes to Bitcoin Core can impact whether an application’s transactions relay, they require socialization with the wider Bitcoin community prior to consideration. Similarly, applications and second layer protocols that utilize transaction relay must be designed with policy rules in mind to avoid creating transactions that are rejected.

Contracting protocols are even more intimately dependent on policies related to prioritization because enforceability on-chain depends on being able to get transactions confirmed quickly. In adversarial environments, cheating counterparties may have an interest in delaying a transaction’s confirmation, so we must also think about how quirks in the transaction relay policy interface can be used against a user.

Lightning Network transactions adhere to the standardness rules mentioned in earlier posts. For example, the peer-to-peer protocol specifies a dust_limit_satoshis in its open_channel message to specify a dust threshold. Since a transaction containing an output with a value lower than the dust threshold would not relay due to nodes’ dust limits, those payments are considered “not enforceable on-chain” and trimmed from commitment transactions.
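The trimming described above can be sketched as a simple filter. This is a simplified illustration, not the LN specification: real implementations also account for the fee of the second-stage transaction when deciding whether a payment is enforceable, and the Payment type and trim_outputs helper are hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass
class Payment:
    label: str        # hypothetical identifier for the pending payment
    amount_sats: int


def trim_outputs(payments, dust_limit_satoshis):
    """Split payments into those that get a commitment output and those trimmed.

    Simplification: only the amount is compared against the dust threshold.
    """
    kept = [p for p in payments if p.amount_sats >= dust_limit_satoshis]
    trimmed = [p for p in payments if p.amount_sats < dust_limit_satoshis]
    return kept, trimmed


# Example values are made up for illustration.
pending = [Payment("coffee", 50_000), Payment("tip", 300)]
kept, trimmed = trim_outputs(pending, dust_limit_satoshis=354)
```

With these example values, the 300 sat payment gets no output in the commitment transaction because an output of that size would not relay.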

Contracting protocols often use timelocked spending paths to give each participant the opportunity to contest the state published on-chain. If the affected user cannot get a transaction confirmed within that frame of time, they may suffer loss of funds. This makes fees extremely important as the primary mechanism for boosting confirmation priority, but also more challenging. Feerate estimation is complicated by the fact that transactions will be broadcast at some unknown later time, nodes often operate as thin clients, and some fee-bumping options are unavailable. For example, if an LN channel participant goes offline, the other party may unilaterally broadcast a presigned commitment transaction to settle the distribution of their shared funds on-chain. Neither party can unilaterally spend the shared UTXO, so when one party is offline, signing a replacement transaction to fee-bump the commitment transaction is not possible. Instead, LN commitment transactions may include anchor outputs for channel participants to attach a fee-bumping child at broadcast time.

However, this fee-bumping method also has limitations. As mentioned in a previous post, adding a CPFP transaction is not effective if mempool minimum feerates rise higher than the commitment transaction’s feerate, so commitment transactions must still be signed with a slightly overestimated feerate in case mempool minimum feerates rise in the future. Additionally, the development of anchor outputs included a number of considerations for the fact that one party may have an interest in delaying confirmation. For example, a party (Alice) may broadcast their own commitment transaction to the network prior to going offline. If this commitment transaction’s feerate is too low for immediate confirmation and if Alice’s counterparty (Bob) doesn’t receive her transaction, he may be confused when his broadcasts of his version of the commitment transaction aren’t successfully relayed. Each commitment transaction has two anchor outputs so that either party may CPFP any of the commitment transactions, e.g. Bob may try to blindly broadcast a CPFP fee bump of Alice’s version of the commitment transaction even if he isn’t sure that she previously broadcast her version. Each anchor output is assigned a small value above the dust threshold and claimable by anyone after some time to avoid bloating the UTXO set.

However, guaranteeing each party’s ability to CPFP a transaction is more complicated than giving each party an anchor output. As mentioned in a previous post, Bitcoin Core limits the number and total size of descendant transactions that can be attached to an unconfirmed transaction as a DoS protection. Since each counterparty has the ability to attach descendants to the shared transaction, one could block the other’s CPFP transaction from relaying by exhausting those limits. The presence of these descendants consequently “pins” the commitment transaction to its low-priority status in mempools.

To mitigate this potential attack, the LN anchor outputs proposal locks all non-anchor outputs with a relative timelock, preventing them from being spent while the transaction is unconfirmed, and Bitcoin Core’s descendant limit policy was modified to allow one extra descendant when this new descendant was small and had no other ancestors. This combination of changes to both protocols ensured that at least two participants in a shared transaction could make feerate adjustments at broadcast time, while not significantly increasing the transaction relay DoS attack surface.
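The interaction between the descendant limit and the carve out can be sketched as follows. This is a rough model under stated assumptions: the limit of 25 descendants and the 10,000 vbyte cap on the extra descendant are our assumptions about default values, the total descendant size limit is ignored entirely, and the names are hypothetical.

```python
from dataclasses import dataclass

# Assumed values, not taken from this post.
MAX_DESCENDANTS = 25          # count limit, including the parent itself
CARVE_OUT_MAX_VSIZE = 10_000  # size cap for the one extra descendant


@dataclass
class Candidate:
    vsize: int                  # virtual size of the new child
    unconfirmed_ancestors: int  # number of unconfirmed ancestors it has


def accepts_child(descendant_count: int, child: Candidate) -> bool:
    """Would a new child fit under the parent's descendant limit?

    descendant_count is the current size of the parent's descendant set
    (parent included). The total descendant size limit is not modeled.
    """
    if descendant_count + 1 <= MAX_DESCENDANTS:
        return True
    # Carve out: exactly one extra descendant, if small and with one ancestor.
    one_over = descendant_count + 1 == MAX_DESCENDANTS + 1
    small = child.vsize <= CARVE_OUT_MAX_VSIZE
    single_parent = child.unconfirmed_ancestors == 1
    return one_over and small and single_parent
```

In this model, a counterparty who fills the descendant set still leaves room for one small child that spends only the shared transaction, which is the situation an anchor output spend is designed to fit.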

CPFP prevention through domination of the descendant limit is an example of a pinning attack. Pinning attacks take advantage of limitations in mempool policy to prevent incentive-compatible transactions from entering mempools or getting confirmed. In this case, mempool policy has made a tradeoff between DoS-resistance and incentive compatibility. Some tradeoff must be made – a node should consider fee bumps but cannot process infinitely many descendants. CPFP carve out refines this tradeoff for a specific use case.

Beyond exhausting the descendant limit, there are other pinning attacks that altogether prevent use of RBF, make RBF prohibitively expensive, or leverage RBF to delay confirmation of an ANYONECANPAY transaction. Pinning is only an issue in scenarios where multiple parties collaborate in creating a transaction or when there is otherwise room for an untrusted party to interact with the transaction. Minimizing a transaction’s exposure to untrusted parties is generally a good way to avoid pinning.

These points of friction highlight not just the importance of policy as an interface for applications and protocols in the Bitcoin ecosystem, but where it needs to improve. Next week’s post will discuss policy proposals and open questions.

Policy proposals

Originally published in Newsletter #259

Last week’s post described anchor outputs and CPFP carve out, ensuring either channel party can fee-bump their shared commitment transactions without requiring collaboration. This approach still contains a few drawbacks: channel funds are tied up to create anchor outputs, commitment transaction feerates typically overpay to ensure they meet mempool minimum feerates, and CPFP carve out only allows one extra descendant. Anchor outputs cannot ensure the same ability to fee-bump for transactions shared between more than two parties, such as coinjoins or multi-party contracting protocols. This post explores current efforts to address these and other limitations.

Package relay includes P2P protocol and policy changes to enable the transport and validation of groups of transactions. It would allow a commitment transaction to be fee-bumped by a child even if the commitment transaction does not meet a mempool’s minimum feerate. Additionally, Package RBF would allow the fee-bumping child to pay for replacing transactions its parent conflicts with. Package relay is designed to remove a general limitation at the base protocol layer. However, due to its utility in fee-bumping of shared transactions, it has also spawned a number of efforts to eliminate pinning for specific use cases. For example, Package RBF would allow commitment transactions to replace each other when broadcast with their respective fee-bumping children, removing the need for multiple anchor outputs on each commitment transaction.
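A small worked example may help show why evaluating a parent and child together matters. The numbers, the Tx type, and the mempool minimum feerate below are all made up for illustration; the point is only that the package's combined feerate can clear a threshold that the parent alone cannot.

```python
from dataclasses import dataclass


@dataclass
class Tx:
    fee_sats: int
    vsize: int  # virtual bytes


def feerate(tx: Tx) -> float:
    return tx.fee_sats / tx.vsize


def package_feerate(txs) -> float:
    """Total fees divided by total virtual size of the group."""
    return sum(t.fee_sats for t in txs) / sum(t.vsize for t in txs)


MEMPOOL_MIN_FEERATE = 5.0           # sat/vB, hypothetical

commitment = Tx(fee_sats=300, vsize=300)   # 1 sat/vB on its own
child = Tx(fee_sats=4_200, vsize=200)      # fee-bumping child

parent_alone_ok = feerate(commitment) >= MEMPOOL_MIN_FEERATE
package_ok = package_feerate([commitment, child]) >= MEMPOOL_MIN_FEERATE
```

Evaluated individually, the commitment transaction would be rejected before the child is ever considered; evaluated as a package, the pair pays 4,500 sats over 500 vbytes.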

A caveat is that existing RBF rules require the replacement transaction to pay a higher absolute fee than the aggregate fees paid by all to-be-replaced transactions. This rule helps prevent DoS through repeated replacements, but allows a malicious user to increase the cost to replace their transaction by attaching a child that is high fee but low feerate. This hinders the transaction from being mined by unfairly preventing its replacement by a high-feerate package, and is often referred to as “Rule 3 pinning.”
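The arithmetic behind this kind of pinning can be sketched with made-up numbers. The check below models only the absolute-fee requirement as worded above; other replacement rules are not modeled, and the Tx type and all values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Tx:
    name: str
    vsize: int     # virtual bytes
    fee_sats: int


def feerate(tx: Tx) -> float:
    return tx.fee_sats / tx.vsize


def pays_more_than_conflicts(replacement: Tx, conflicts) -> bool:
    """Absolute-fee check: replacement must outpay everything it evicts."""
    return replacement.fee_sats > sum(tx.fee_sats for tx in conflicts)


shared = Tx("shared", vsize=200, fee_sats=1_000)                # 5 sat/vB
pin_child = Tx("pin_child", vsize=100_000, fee_sats=100_000)    # 1 sat/vB
honest = Tx("honest_replacement", vsize=300, fee_sats=15_000)   # 50 sat/vB

conflicts = [shared, pin_child]
allowed = pays_more_than_conflicts(honest, conflicts)

# Smallest fee the honest party would need to pay to satisfy the check.
needed_fee = sum(tx.fee_sats for tx in conflicts) + 1
```

Here the honest replacement has a far higher feerate than either conflicting transaction, yet it is rejected because the large, low-feerate child raises the absolute fee it must beat from 1,000 sats to over 100,000 sats.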

Developers have also proposed entirely different ways of adding fees to presigned transactions. For example, signing inputs of the transaction using SIGHASH_ANYONECANPAY | SIGHASH_ALL could allow the transaction broadcaster to provide fees by appending additional inputs to the transaction without changing the outputs. However, as RBF does not have any rule requiring the replacement transaction to have a higher “mining score” (i.e. would be selected for a block faster), an attacker could pin these types of transactions by creating replacements encumbered by low-feerate ancestors. What complicates the accurate assessment of the mining score of transactions and transaction packages is that the existing ancestor and descendant limits are insufficient to bound the computational complexity of this calculation. Any connected transactions can influence the order in which transactions get picked into a block. A fully-connected component, called a cluster, can be of any size given current ancestor and descendant limits.

A long-term solution to address some mempool deficiencies and RBF pinning attacks is to restructure the mempool data structure to track clusters instead of just ancestor and descendant sets. These clusters would be limited in size. A cluster limit would restrict the way users can spend unconfirmed UTXOs, but make it feasible to quickly linearize the entire mempool using the ancestor score-based mining algorithm, build block templates extremely quickly, and add a requirement that replacement transactions have a higher mining score than the to-be-replaced transaction(s).
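To illustrate what a cluster is, the sketch below groups unconfirmed transactions into connected components of the spending graph. It is not how any mempool implementation tracks clusters, and it does not attempt linearization; find_clusters and the example transaction names are hypothetical.

```python
from collections import defaultdict


def find_clusters(txids, spends):
    """Group transactions into connected components.

    spends is an iterable of (child, parent) pairs, meaning the child
    spends an unconfirmed output of the parent.
    """
    neighbors = defaultdict(set)
    for child, parent in spends:
        neighbors[child].add(parent)
        neighbors[parent].add(child)

    seen = set()
    clusters = []
    for txid in txids:
        if txid in seen:
            continue
        component = set()
        stack = [txid]
        while stack:
            current = stack.pop()
            if current in component:
                continue
            component.add(current)
            stack.extend(neighbors[current] - component)
        seen |= component
        clusters.append(component)
    return clusters


# P1 and P3 are neither ancestor nor descendant of each other, yet they
# share a cluster through C1, P2, and C2.
txids = ["P1", "P2", "P3", "C1", "C2", "X"]
spends = [("C1", "P1"), ("C1", "P2"), ("C2", "P2"), ("C2", "P3")]
clusters = find_clusters(txids, spends)
```

The example shows why ancestor and descendant limits alone do not bound cluster size: each transaction here has at most two ancestors or two descendants, but the chain of shared parents and children could be extended indefinitely.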

Even so, it’s possible that no single set of policies can meet the wide range of needs and expectations for transaction relay. For example, while recipients of a batched payment transaction benefit from being able to spend their unconfirmed outputs, a relaxed descendant limit leaves room for pinning package RBF of a shared transaction through absolute fees. A proposal for v3 transaction relay policy was developed to allow contracting protocols to opt in to a more restrictive set of package limits. V3 transactions would only permit packages of size two (one parent and one child) and limit the weight of the child. These limits would mitigate RBF pinning through absolute fees, and offer some of the benefits of cluster mempool without requiring a mempool restructure.

Ephemeral Anchors builds upon the properties of v3 transactions and package relay to improve anchor outputs further. It exempts anchor outputs belonging to a zero-fee v3 transaction from the dust limit, provided the anchor output is spent by a fee-bumping child. Since the zero-fee transaction must be fee-bumped by exactly one child (otherwise a miner would not be incentivized to include it in a block), this anchor output is “ephemeral” and would not become a part of the UTXO set. The ephemeral anchor proposal implicitly prevents non-anchor outputs from being spent while unconfirmed without 1 OP_CSV timelocks, since the only allowed child must spend the anchor output. It would also make LN symmetry feasible with CPFP as the fee provisioning mechanism for channel closing transactions. It also makes this mechanism available for transactions shared between more than two participants. Developers have been using bitcoin-inquisition to deploy Ephemeral Anchors and proposed soft forks to build and test these multi-layer changes on a signet.

The pinning problems highlighted in this post, among others, spawned a wealth of discussions and proposals to improve RBF policy last year across mailing lists, pull requests, social media, and in-person meetings. Developers proposed and implemented solutions ranging from small amendments to a complete revamp. The -mempoolfullrbf option, intended to address pinning concerns and a discrepancy in BIP125 implementations, illuminated the difficulty and importance of collaboration in transaction relay policy. While a genuine effort had been made to engage the community using typical means, including starting the bitcoin-dev mailing list conversation a year in advance, it was clear that the existing communication and decision-making methods had not produced the intended result and needed refinement.

Decentralized decision-making is a challenging process, but necessary to support the diverse ecosystem of protocols and applications that use Bitcoin’s transaction relay network. Next week will be our final post in this series, in which we hope to encourage our readers to participate in and improve upon this process.

Get Involved

Originally published in Newsletter #260

We hope this series has given readers a better idea of what’s going on while they are waiting for confirmation. We started with a discussion about how some of the ideological values of Bitcoin translate to its structure and design goals. The distributed structure of the peer-to-peer network offers increased censorship resistance and privacy over a typical centralized model. An open transaction relay network helps everybody learn about transactions in blocks prior to confirmation, which improves block relay efficiency, makes joining as a new miner more accessible, and creates a public auction for block space. As the ideal network consists of many independent, anonymous entities running nodes, node software must be designed to protect against DoS and generally minimize operational costs.

Fees play an important role in the Bitcoin network as the “fair” way to resolve competition for block space. Mempools with transaction replacement and package-aware selection and eviction algorithms use incentive compatibility to measure the utility of storing a transaction, and enable RBF and CPFP as fee-bumping mechanisms for users. The combination of these mempool policies, wallets that construct transactions economically, and good feerate estimation create an efficient market for block space that benefits everybody.

Individual nodes also enforce transaction relay policy to protect themselves against resource exhaustion and express personal preference. At a network-wide level, standardness rules and other policies protect resources that are critical to scaling, accessibility of running a node, and ability to update consensus rules. As the vast majority of the network enforces these policies, they are an important part of the interface that Bitcoin applications and L2 protocols build upon. They also aren’t perfect. We described several policy-related proposals that address broad limitations and specific use cases such as pinning attacks on L2 settlement transactions.

We also highlighted that the ongoing evolution of network policies requires collaboration between developers working on protocols, applications, and wallets. As the Bitcoin ecosystem grows with respect to software, use cases, and users, a decentralized decision-making process becomes more necessary but also more challenging. Even as Bitcoin adoption grows, the system emerges from the concerns and efforts of its stakeholders – there is no company in charge of gathering customer feedback and hiring engineers to build new protocol features or remove unused features. Stakeholders who wish to be part of the rough consensus of the network have different avenues of participating: informing themselves, surfacing questions and issues, involving themselves in the design of the network, or even contributing to the implementation of improvements. Next time your transaction is taking too long to confirm, you know what to do!